Every once in a while, we’re going to use this space to just brag about something awesome. We hope you don’t mind! Yesterday, Where Shadows Slumber was featured in the Today tab on the App Store as the “Game of the Day.”

Every day, the App Store Game editors pick a game they feel deserves a moment in the spotlight. The impact it had on sales was pretty dramatic, as we’ll show in our upcoming financial tell-all blog post. But more importantly, it’s a nice ego boost for some struggling indie devs. Someone out there cares! Thanks, Apple.

Unfortunately, you can only read the official article if you’re on an iPhone or iPad. So just to make sure everyone gets a chance to read it, I’ve copied the text below as best I could. What follows is the full text of our “Game of the Day” article, which was written entirely by staff at Apple and not by us. How cool is that?!

Most games treat darkness as a threat. In the melancholy Where Shadows Slumber, shadows shed light on perplexing spatial puzzles.

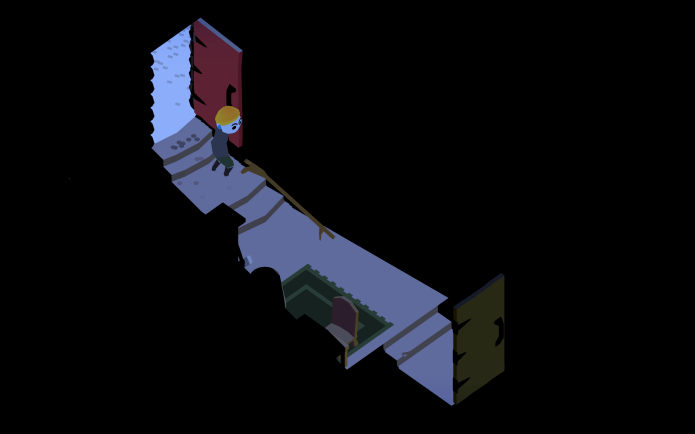

Magically repair a broken bridge by passing the right combination of light and shadow over it.

You’re Obe, an old man equipped with a mystical lantern that can change and morph each mazelike level. As you progress, objects and other light sources create shadowy patterns. Activating switches causes objects to slide and move, altering the location of the shadows and revealing previously unseen paths – and dangers.

Obe eventually has to coax other characters to press buttons and switches. It ramps up quickly; within a few levels, you’ll have your hands full puzzling out the right patterns to open the way forward. It’s typically brief, however. Where Shadows Slumber is filled with aha moments when the solution suddenly becomes evident. You won’t be stuck in the dark for long.

What’s wrong with all these people? It gets pretty creepy.

With an evocative animation style and stark use of color, Where Shadows Slumber paints a dreary, beautiful picture. A strange, rhythmic soundtrack amps up the tension, while brief, menacing cutscenes featuring mean-spirited beasts give glimpses into the game’s mysterious narrative. Where is Obe headed, anyway?

It’s all a bit hazy. But while the story is deliberately ambiguous, Where Shadows Slumber’s unique, shadow-manipulating mechanic shines clear as day. Step into the light.

We’re so thankful to the staff at Apple for featuring our game. Please share this article with your friends if they haven’t purchased the game yet – what more proof do you need that our game is awesome?!

See you next time!

= = = = = = = = = = = = = = = = = = = = = = = = = = = = = = = = = = = =

Where Shadows Slumber is now available for purchase on the App Store, Google Play, and the Amazon App Store.

Find out more about our game at WhereShadowsSlumber.com, find us on Twitter (@GameRevenant), Facebook, and itch.io, and feel free to email us directly at contact@GameRevenant.com.

Frank DiCola is the founder of Game Revenant and the artist for Where Shadows Slumber.